The Chat Interface That Changed the World: How ChatGPT Came to Be

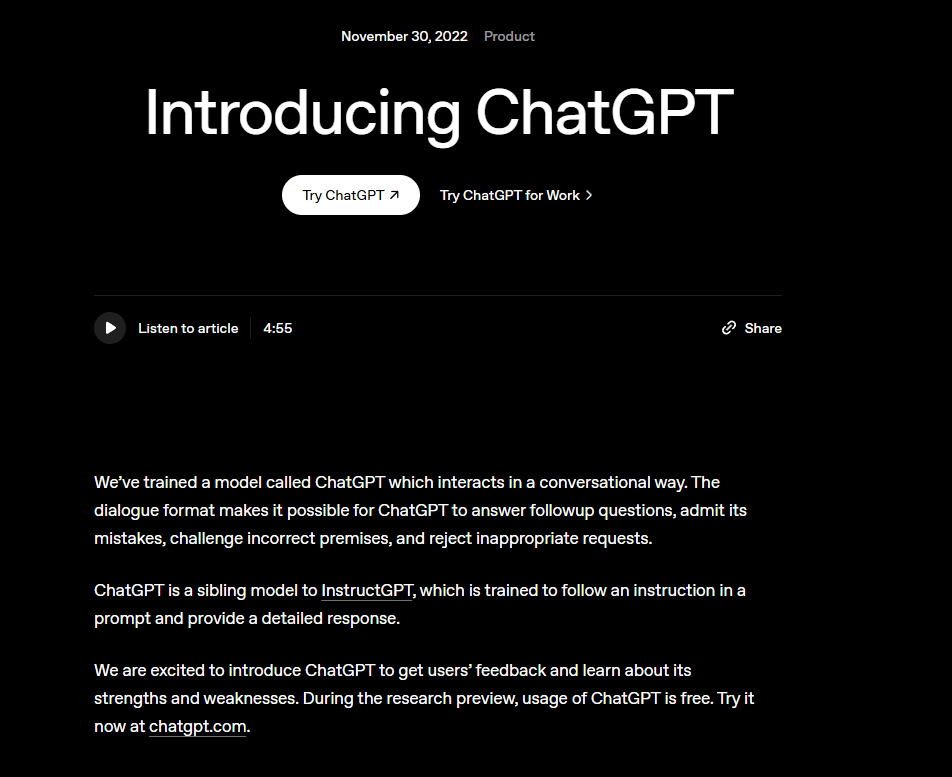

At the end of 2022, many people experienced a rather unusual sensation when they opened ChatGPT for the first time.

It did not resemble a search engine, nor a voice assistant, and still less the earlier generation of chatbots that became tedious after only a few exchanges. You could ask it a question and it would answer; if its explanation seemed insufficiently clear, it could continue elaborating; if you asked it to adopt a different tone, it would genuinely do so; if you pointed out an error, it might even rewrite the response in accordance with your correction.

From that moment onward, AI truly began to move out of papers, laboratories, and technology headlines, becoming something ordinary people could use directly in everyday life. When many people later recalled their first experience with ChatGPT, they reached for a strikingly similar word: astonishment.

This astonishment certainly arose from the model’s capabilities, but it cannot be reduced to capabilities alone.

The more important question has never been why ChatGPT was so powerful, but rather: why did so many technologies that already existed suddenly converge, in 2022, into something shaped like ChatGPT?

The conclusion can be stated upfront.

ChatGPT was not a product invented overnight by some exceptionally gifted team. It was, rather, the outcome of four technological trajectories converging at the same moment: the Transformer raised the ceiling for language models, large-scale training drove qualitative jumps in capability, training with human feedback reshaped the model into an assistant, and a seemingly simple chat interface turned all of this into a product that ordinary people could use immediately.

In other words, ChatGPT’s breakout was not merely a model upgrade.

It was also the result of technology, engineering, and product judgment all arriving at the right point simultaneously.

ChatGPT Did Not Begin with Chat, but with Transformer

When many people first encountered ChatGPT, they naturally understood it as an exceptionally intelligent chatbot.

That interpretation, however, understates what it is.

The prehistory of ChatGPT began not with conversation, but with the underlying architecture of large language models.

In 2017, Google introduced the Transformer architecture. In retrospect, this was one of the most important starting points of the entire era of large models. The architecture was built entirely on attention mechanisms, abandoning recurrent and convolutional structures altogether, thereby greatly improving parallelization and training efficiency.
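To make "built entirely on attention" a little more concrete, here is a minimal sketch of scaled dot-product attention, the core operation of the architecture, written in plain Python. The function names and the toy token vectors are assumptions made for illustration, and a real Transformer adds learned projections, multiple heads, and many stacked layers on top of this.

```python
import math

def softmax(scores):
    """Turn a list of raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Score this query against every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # The output is a weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
    return outputs

# Three toy token vectors (hypothetical numbers).
# In self-attention the same vectors serve as queries, keys, and values.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
mixed = attention(tokens, tokens, tokens)
```

Each pass through the loop over queries depends only on the inputs, never on the result for another position. That independence is what allows the computation to be spread across hardware in parallel, whereas a recurrent model has to walk through the sequence one step at a time.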

Its most important significance lies not merely in making model training more effective, but in making it possible to continue scaling models upward.

This may sound less dramatic than other milestones, yet its influence was extraordinarily far-reaching.

Before that point, machines could of course process language, perform translation, answer questions, and generate text, but these capabilities were difficult to unify within a single technical path that was both sufficiently powerful and sufficiently scalable. The arrival of Transformer was equivalent to providing the later GPT series, large language models, and ultimately ChatGPT with a structural beam capable of bearing their weight.

It did not directly invent “dialogue.”

But it provided, for the first time, the conditions in which everything that followed could grow.

Without Transformer, ChatGPT would very likely not look the way it does today. It would not be this large, this general, or this much like a tool with which one can discuss almost anything.

What Truly Excited the Field Was GPT-3’s Demonstration That Scale Can Produce Qualitative Breakthroughs

Transformer solved the problem of whether models could be made large.

GPT-3 addressed what would happen once they were.

In 2020, OpenAI released GPT-3. With 175 billion parameters, it represented an astronomical scale for its time. Yet what truly shook the field was not simply its size, but the generality it exhibited. The same model, without task-specific fine-tuning, could produce credible results across translation, question answering, rewriting, code generation, and a wide range of other tasks.

The GPT-3 paper, “Language Models are Few-Shot Learners,” summarized this capacity as few-shot, or in-context, learning.
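In practice, few-shot learning means placing a handful of worked examples directly in the prompt and letting the model continue the pattern, with no change to the model's parameters. The sketch below only assembles such a prompt. The helper name and the "Input:/Output:" layout are assumptions chosen for illustration, not a format prescribed by the paper.

```python
def build_few_shot_prompt(task, examples, query):
    """Assemble a prompt: task description, worked examples, then the new input."""
    lines = [task, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    # The final item is left unanswered so the model can fill it in.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)

examples = [("cheese", "fromage"), ("sea otter", "loutre de mer")]
prompt = build_few_shot_prompt("Translate English to French.", examples, "peppermint")
print(prompt)
```

Swapping in a different task description and a different set of examples turns the same model toward a different job, which is the generality described above.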

The significance of this development extended far beyond the fact that the model had become stronger.

It changed how people imagined AI.

Before GPT-3, many people’s understanding of AI still centered on building a separate model for each task. Translation had translation models, recommendation had recommendation models, and speech recognition had speech recognition models. The dominant assumption was that models became more useful by becoming stronger at isolated tasks.

GPT-3 offered an entirely different answer: perhaps it was unnecessary to decompose the world into countless small tasks and teach them to models one by one. Perhaps it was enough to train a sufficiently large general-purpose model, which would, in the course of scale expansion, develop abilities that had previously required specialized systems.

It was also during this period that scaling laws increasingly came to be accepted as an industry-wide consensus.

In “Scaling Laws for Neural Language Models,” OpenAI researchers argued that increases in model size, data scale, and compute produce predictable improvements in performance, and that these improvements follow power-law trends across a broad range.
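A power-law trend has a simple shape that a few lines of code can show. The sketch below assumes a loss of the form L(N) = (N_c / N) ** alpha, where N is the number of parameters. The constants `n_c` and `alpha` are illustrative placeholders, not values fitted to any real model.

```python
def power_law_loss(n_params, n_c=1.0e14, alpha=0.08):
    """Loss predicted by L(N) = (N_c / N) ** alpha; the constants are illustrative only."""
    return (n_c / n_params) ** alpha

sizes = [1e8, 1e9, 1e10, 1e11]
losses = [power_law_loss(n) for n in sizes]

# Ratio between the predicted losses of consecutive model sizes.
ratios = [later / earlier for earlier, later in zip(losses, losses[1:])]

for n, loss in zip(sizes, losses):
    print(f"{n:.0e} parameters -> predicted loss {loss:.3f}")
```

Under this form, every tenfold increase in parameters multiplies the predicted loss by the same factor, `10 ** -alpha`. That regularity is what made further investment in scale look like a predictable bet rather than a gamble.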

In retrospect, GPT-3 was important not merely because it was powerful.

It was important because it led the industry, for the first time, to genuinely believe this: the path toward stronger AI might indeed be opened by continuing to scale models upward.

From GPT to ChatGPT, the Missing Piece Was Not More Scale, but Better Alignment with Human Intent

And this was precisely where the problem emerged.

GPT-3 was powerful, but it was not easy to use.

It could produce striking sentences and deliver results beyond expectation on many tasks. But it could also wander off topic, misread instructions, and fabricate nonsense with complete confidence. Much of the time, one was not so much using it as probing it, guessing at it, and adapting oneself to it.

It resembled a powerful language engine more than a genuine assistant capable of working with you.

That was the central threshold that had to be crossed before ChatGPT could appear.

What OpenAI added later was not greater scale, but a way of turning the model into the kind of tool people actually wanted. The core of this shift was InstructGPT and RLHF, or reinforcement learning from human feedback.

The InstructGPT paper, “Training language models to follow instructions with human feedback,” explicitly pointed out that simply scaling up language models does not naturally improve their ability to follow user intent.

The basic idea is not difficult to describe.

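First, humans write demonstration answers, and the model is fine-tuned on them; then humans compare multiple model answers and indicate which one is preferable; finally, those preferences are used to train a reward model, and the language model is further optimized with reinforcement learning to produce answers that the reward model scores highly. What the model gradually learns is no longer only how language should continue, but what kinds of responses human beings actually prefer.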
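The comparison step described above can be expressed as a small pairwise loss. The sketch below uses the standard formulation in which a reward model is penalized whenever it scores the answer humans rejected above the one they preferred. The function name and the reward values are hypothetical, and a real system would compute the rewards with a neural network and minimize this loss by gradient descent.

```python
import math

def preference_loss(reward_preferred, reward_rejected):
    """Pairwise loss: -log(sigmoid(reward_preferred - reward_rejected))."""
    margin = reward_preferred - reward_rejected
    # log1p(exp(-margin)) is mathematically equal to -log(sigmoid(margin)).
    return math.log1p(math.exp(-margin))

# Hypothetical reward scores for a pair of answers to the same prompt.
agrees = preference_loss(2.0, -1.0)     # reward model ranks the pair as humans did
disagrees = preference_loss(-1.0, 2.0)  # reward model ranks the pair the wrong way
tie = preference_loss(0.5, 0.5)         # reward model cannot tell the answers apart
```

The loss is small when the reward model agrees with the human ranking and grows as it disagrees, so minimizing it pulls the reward model's scores toward human preferences. Those scores then stand in for human judgment during the reinforcement learning stage.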

At first glance, this may seem like nothing more than a slight adjustment in style.

But it was not.

It was a change of role.

From that point onward, the model’s goal was no longer simply to generate a plausible passage of text, but to understand human intention as fully as possible, respond to human needs, and proceed in accordance with human ways of working.

This was the most important step in the transition from GPT to ChatGPT.

Parameters determine the upper bound of capability.

Alignment determines the lower bound of usability.

The ability to speak is not the same as the ability to help.

And ChatGPT’s critical breakthrough lay precisely in that word: help.

What May Truly Have Changed the World Was Not the Model, but the Chat Interface

Even at this point, the technological story was still incomplete.

The AI field has never lacked impressive papers, nor demonstrations produced in laboratories. What has truly been scarce is a product form that ordinary people can immediately understand, immediately use, and immediately recognize as useful.

One underestimated reason why ChatGPT erupted in 2022 is therefore this:

OpenAI gave it the simplest, yet also the most powerful, product shell possible: a chat interface.

There was no developer console, no complicated configuration, no specialist barrier, and no long list of operational logic that users had to learn in advance.

You only had to type a sentence.

It would take it from there.

The interaction looks almost too simple, simple enough to make its importance easy to overlook.

You did not need to understand Transformer, parameter scale, or reinforcement learning. You did not even need to know what the phrase “large language model” meant. You only had to speak to it as you normally would and hand the question over.

And it caught it.

That is why, when many people were first stunned by ChatGPT, it was not because they had understood the papers, learned the benchmarks, or been told by technology media that “a new era had arrived.”

It was because the moment itself was so direct.

You said one sentence to a chat interface, and it genuinely continued in the direction you intended.

It was not merely returning a result.

It was participating.

This is the point at which ChatGPT most truly feels like an invention.

Later Upgrades Added Not More Magic, but More Practical Utility

If the most compelling aspect of ChatGPT in 2022 was that it felt like magic, then the work of later generations of models was to gradually refine that magic into a tool.

What GPT-4 brought was not only more polished answers, nor merely a more mature writing style. What it added was stability in complex tasks, somewhat stronger reasoning, and greater reliability across a wider range of scenarios.

The GPT-4 technical report defined GPT-4 as a large-scale multimodal model that can accept image and text inputs and produce text outputs, marking the point at which ChatGPT began to move from “being able to chat” toward “being better able to handle complex tasks.”

Later still, multimodality became the new direction.

Models no longer processed only text; they began to see images, hear audio, and support more natural forms of interaction.

On the GPT-4o launch page, OpenAI described it as a model in which text, image, and audio capabilities are integrated more natively. This further suggests that what ChatGPT aims to become is not merely a text-based chatbot, but a more general digital interface.

It can write, see, hear, call tools, and enter human workflows.

It no longer merely answers questions.

It has begun to help people accomplish things.

In this sense, later upgrades to ChatGPT were not simply intended to make it more human-like, but to make it more like a durable tool.

Stronger reasoning reduces mistakes.

Longer context windows make it possible to handle more complete tasks.

Multimodal capability prevents it from being confined to the world of text alone.

Software in the past required human beings to learn how to use it.

Large-model tools are now beginning, in turn, to learn human language.

This is not merely a change in products.

It is a change in the relationship between human beings and tools.

And even today, we may still not have fully adapted to that relationship.