The Age of Agents: Everyone Should Have an AI Digital Employee

What You Really Need May Not Be a Super Agent

In January 2025, OpenAI took the lead by launching Operator. People rushed in and asked it to book restaurants and fill out forms, only to find that it could not even handle a date picker. Two months later, Manus invite codes were briefly bid up to over ten thousand yuan on a trading platform. Then in July, ChatGPT Agent officially launched: Pro users got 400 calls per month, while Plus users had only 40, and if that was not enough, they had to pay more.

Within half a year, three heavyweight Agent products appeared one after another. Everyone rushed to try them, and everyone complained that they were not very good.

But it is worth calming down and asking yourself: what kind of Agent do you actually need?

Do you need an all-around performer that can automatically compare the prices of 100 pairs of sneakers while also generating a poster design proposal? Or a super assistant that can book that impossible-to-reserve restaurant for Friday night?

Probably neither.

What most people really need from an Agent is actually much simpler.

Before you open your computer every morning, someone has already organized the major developments in your industry from the night before. Before the Monday meeting every week, someone has already pulled last week’s data and laid it out neatly. Before every weekly report, someone has already turned this week’s work logs into a first draft.

These needs are not cool. They are not sexy. They would never make it onto a launch-event demo.

But they have one thing in common: they are repetitive, fixed, and personal. No company is going to build a product specifically for your morning reading habits, and no general-purpose Agent can precisely understand what “the industry you care about” really means.

In other words, you do not need an all-powerful Super Agent. What you need is a Mini Agent that does exactly one thing for you, and does it on time every day.

And that is something you can build yourself right now.

What Exactly Is an Agent

Before becoming the hottest idea in tech, Agents had already been quietly sitting in academia for decades.

The word “Agent” comes from the Latin verb “agere,” which means “to act” or “to do.”

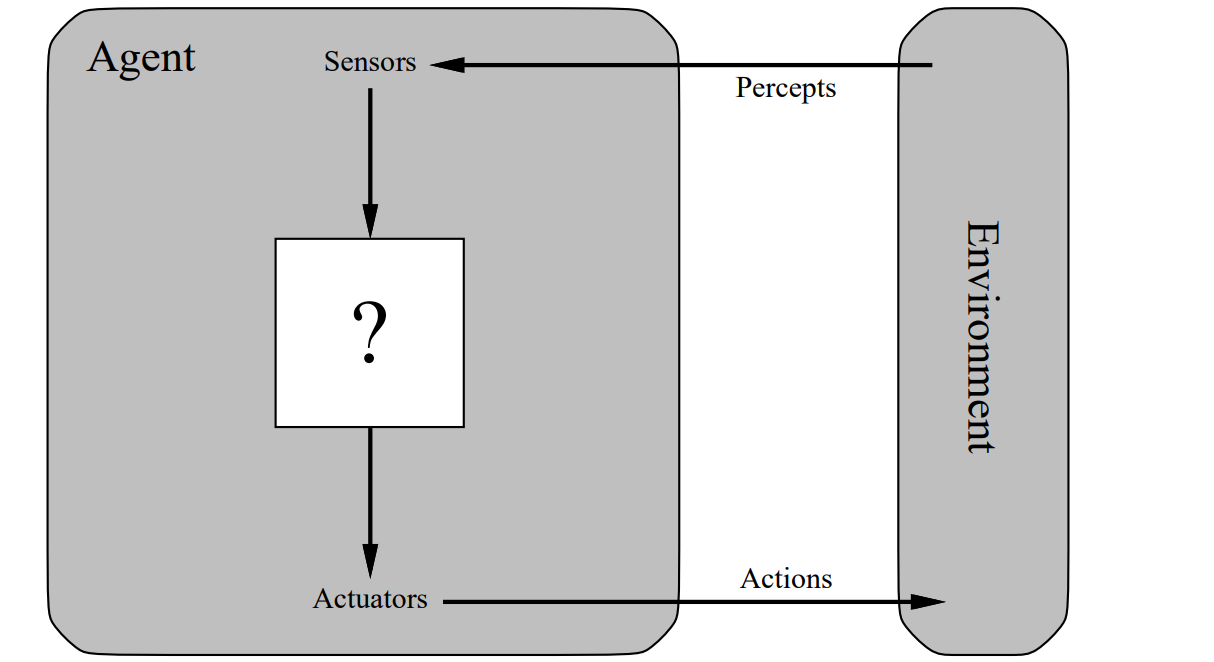

In AI, it has a classic academic definition: an entity that can perceive its environment, make autonomous decisions, and take action. In Artificial Intelligence: A Modern Approach, Stuart Russell and Peter Norvig offer a more precise description: an Agent is anything that perceives its environment through sensors and acts upon that environment through actuators.

Figure: Agents interact with their environment through sensors and actuators.

Take a simple example: the robot vacuum in your home is an Agent. It perceives the layout of the room through sensors (perception), decides whether to clean the living room first or the bedroom first (decision-making), and then goes off to clean on its own (action). The whole process does not require you to stand there with a remote control and guide it step by step.
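
To make the perceive, decide, act cycle concrete, here is a minimal Python sketch of the robot vacuum. The class name `VacuumAgent`, the room dictionary, and the alphabetical cleaning order are all illustrative assumptions, not how any real vacuum works.

```python
from dataclasses import dataclass, field


@dataclass
class VacuumAgent:
    """Toy robot vacuum following the perceive -> decide -> act cycle."""
    cleaned: list = field(default_factory=list)

    def perceive(self, rooms: dict) -> list:
        # Sensor step: report which rooms are currently dirty.
        return sorted(name for name, dirty in rooms.items() if dirty)

    def decide(self, dirty_rooms: list):
        # Decision step: pick the next room, or None when nothing is dirty.
        return dirty_rooms[0] if dirty_rooms else None

    def act(self, rooms: dict, target: str) -> None:
        # Actuator step: clean the chosen room and record it.
        rooms[target] = False
        self.cleaned.append(target)

    def run(self, rooms: dict) -> list:
        # Repeat the cycle until perception finds no dirty room.
        while (target := self.decide(self.perceive(rooms))) is not None:
            self.act(rooms, target)
        return self.cleaned


rooms = {"living room": True, "bedroom": True, "kitchen": False}
print(VacuumAgent().run(rooms))
```

Nobody tells the agent which room to clean next. It reads the state of the environment, chooses, and acts, which is the whole point of the definition.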

But if this definition has existed for decades, why did Agents suddenly become the hottest concept in 2024 and 2025?

The answer lies not in the definition itself, but in a missing piece of the puzzle finally being added.

Traditional Agents, whether robot vacuums or robotic arms on industrial assembly lines, had extremely limited “decision-making” ability. They relied either on preset rules or on statistical models, and the moment they encountered something outside those rules, they were lost. They could be “automatic,” but not really “intelligent.”

The arrival of large language models gave Agents a real “brain” for the first time.

That brain can understand natural language, carry out multi-step reasoning, and flexibly adjust its strategy based on context. Once it is connected to tool use, web interaction, code execution, and other capabilities, the result is an Agent that can understand what you say, work out how to do it, and use whatever tools it needs to get the job done.

So if you had to distinguish, in one sentence, the two concepts people most often confused in 2025, it would be this:

A chatbot answers your questions. An Agent takes your goal and does the work.

If you tell ChatGPT, “Help me write an email,” it gives you a block of text and then waits for you to copy, paste, and send it yourself. That is a chatbot.

If you tell ChatGPT Agent, “Help me send my boss a leave request from Friday to next Monday because I have family matters to take care of,” it opens Gmail on its own, writes the message, fills in the recipient, and then asks you, “Do you want to send it?” That is an Agent.

One generates. The other gets things done. That is the new soul large language models have injected into the old concept of the Agent.

The History of Agents

Looking back, the evolution of Agents has gone through three clear waves. Each one lowered the same threshold: how much technical knowledge do you need in order to let an Agent work for you?

The earliest Agents that worked for people were not actually called Agents. They were called scripts, macros, or RPA (robotic process automation).

The logic was simple and blunt: you told it, “If an email arrives with ‘invoice’ in the subject line, automatically download the attachment and save it to a designated folder,” and it would obediently do exactly that. RPA tools like UiPath and Blue Prism were once the backbone of enterprise automation, helping banks, insurers, and finance departments save huge amounts of labor.

But they had one fatal limitation: every single step had to be defined by a human. The moment the workflow changed, the rules stopped working. They could execute, but they could not understand. They were like precision assembly lines: as long as the product specification stayed the same, efficiency was extremely high; the moment the model changed, the whole line had to be readjusted.
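
The brittleness is easy to see in code. Below is a rough Python sketch of the invoice rule described above. The function name `handle_email` and the sample subjects are made up for illustration, and no real RPA product is being modeled.

```python
def handle_email(subject: str, attachments: list) -> list:
    """Return the attachments a fixed rule would save."""
    # The single hard-coded rule: the subject must contain "invoice".
    if "invoice" in subject.lower():
        return list(attachments)
    # Anything the rule author did not anticipate is silently ignored.
    return []


print(handle_email("Invoice for March", ["march.pdf"]))    # rule matches
print(handle_email("Your bill for March", ["march.pdf"]))  # same intent, rule misses
```

Both emails carry the same kind of document, but only the first one contains the keyword. The rule executes perfectly and understands nothing.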

In March 2023, less than three weeks after GPT-4 was released, an open-source project called AutoGPT burst onto the scene. Within a month, it had gained more than 100,000 stars on GitHub, and it remains one of the fastest-growing projects in the platform’s history.

Its idea was exciting: give AI a goal, and it would break the task down on its own, call tools on its own, and execute on its own, with no human intervention needed the entire time. Similar projects such as BabyAGI and MetaGPT also appeared during the same period.

But it did not take long for everyone to notice one problem: they were just too unreliable.

AutoGPT often veered off course by the third or fourth step, got stuck in infinite loops, or made baffling decisions. You might ask it to research an industry report, and it would spend the first 20 minutes trying to register a new email account.
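
A simplified Python sketch shows the shape of such a goal-driven loop and two cheap guards against the failure modes above. This is not AutoGPT's actual code. The planner functions are hypothetical stand-ins for a language-model call, and `max_steps` and the repeated-step check are assumptions chosen for illustration.

```python
def run_agent(goal, plan_next_step, max_steps=5):
    """Run a goal-driven loop; stop on 'done', a repeated step, or the step budget."""
    history = []
    for _ in range(max_steps):
        step = plan_next_step(goal, history)
        if step == "done":
            return history, "finished"
        if step in history:
            # The planner is repeating itself: a likely infinite loop.
            return history, "stopped: repeated step"
        history.append(step)
    return history, "stopped: step budget exhausted"


def stuck_planner(goal, history):
    # Hypothetical stand-in for a model that keeps proposing the same step.
    return "register a new email account"


def good_planner(goal, history):
    # Hypothetical stand-in for a model that follows a sensible two-step plan.
    steps = ["search for reports", "summarize findings"]
    return steps[len(history)] if len(history) < len(steps) else "done"


print(run_agent("research an industry report", stuck_planner))
print(run_agent("research an industry report", good_planner))
```

The guards only stop a runaway loop. They do nothing to make the planner choose the right step in the first place, and that was the harder problem for this wave.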

Academia uses the term “hallucination” to describe how large language models can confidently make things up, but the problem with this wave of Agents was even more direct: they confidently went off and did the wrong work.

The concept was ahead of its time, but the engineering maturity was nowhere close. This wave came quickly and faded quickly, and its greatest legacy was not a fully usable product, but a direction that had been validated.

The path of large-language-model-driven Agents was the right one. It just had not reached its destination yet.

The real turning point came in 2025.

First, Manus exploded across social media in March with a series of stunning demos. It showed a complete chain in which an Agent could understand a task, break it into steps, call a browser and code executor, and deliver a final result. That feeling of “watching it work step by step” gave users a sense of transparency, and for the first time, people felt that Agents were truly usable.

Then OpenAI merged Operator and Deep Research into ChatGPT in the middle of the year and launched a unified ChatGPT Agent. It could operate a browser, run code, call APIs, and even let users interrupt and revise tasks halfway through. It evolved from merely “being able to do the work” to “being able to collaborate.”

OpenAI: Introducing ChatGPT Agent

What fundamentally set this wave apart from the previous two was that Agents were no longer proofs of concept in a lab. They had become products people could actually pay to use. They started to have stability, user-experience design, and business models.

And the tools for building Agents also matured rapidly during this same year.

Developer platforms such as Claude Code and Cursor are lowering the barrier to “building an Agent” from “you need to be a programmer” to “you just need to be able to clearly describe what you want.”

And at the beginning of 2026, OpenClaw confirmed this trend from another direction.

This project started out as nothing more than a developer’s weekend side project, yet in just two months it gained more than 100,000 GitHub stars. What it did was not complicated: it let you run a private AI assistant on your own device, connect it to mainstream chat tools like Telegram and Slack, and keep it on standby at all times.

No launch event. No fundraising deck. One person, one weekend, one open-source project, and that was enough to create an Agent product used by hundreds of thousands of people.

Three waves, three barriers lowered. From “you need to know how to code to automate things” to “if you can talk, you can build an Agent.”

We are standing at the start of the third wave.

Building a Daily AI News Agent with Claude Code

Next, I will demonstrate how to build a Daily AI News Agent with Claude Code.

What this Agent does is very simple: every day, on a schedule, it fetches the information sources you specify, filters out content related to the AI industry, and organizes it into a briefing in the format you want.
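
Before handing the job to Claude Code, it helps to see how small the core logic is. The Python sketch below covers only the filter and format steps, using made-up sample items in place of real fetching. The keyword list, the function names, and the briefing layout are assumptions for illustration, and the Agent that Claude Code actually generates may look quite different.

```python
import re
from datetime import date

# Illustrative keyword list; a real Agent would use whatever you specify.
AI_KEYWORDS = {"ai", "llm", "agent", "openai", "claude"}


def is_ai_related(title: str) -> bool:
    # Match whole words so that "said" does not count as "ai".
    words = set(re.findall(r"[a-z0-9]+", title.lower()))
    return bool(words & AI_KEYWORDS)


def build_briefing(items: list, day: date) -> str:
    # Keep only AI-related items and number them under a dated heading.
    picked = [item for item in items if is_ai_related(item["title"])]
    lines = [f"AI Daily Briefing, {day.isoformat()}"]
    lines += [f"{i}. {item['title']} ({item['source']})"
              for i, item in enumerate(picked, start=1)]
    return "\n".join(lines)


# Made-up sample data standing in for fetched feed entries.
sample_items = [
    {"title": "New Agent framework released", "source": "example-feed"},
    {"title": "Rail strike said to end soon", "source": "example-feed"},
]
print(build_briefing(sample_items, date(2026, 1, 5)))
```

Fetching and scheduling are left out here on purpose. Those are the parts that depend on your own sources and your own machine.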

Open Windows PowerShell

Install Claude Code

    irm https://claude.ai/install.ps1 | iex

For installation commands on other operating systems, see the Claude Code Docs.

Install Git

    winget install --id Git.Git -e --source winget

Navigate to the project folder

    cd your-project-path

Initialize Claude Code

    claude

Install Claude Code related plugins

    # Install the long-term memory plugin

Download the Agent requirements document and place it in the project root directory

Agent Requirements Document

Have Claude Code read the Agent requirements document and execute it

    Read `daily-ainews-agent.md` in the current directory and strictly follow the document requirements to build the Daily AI News Agent.

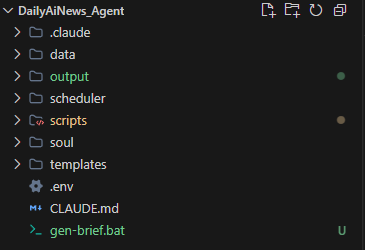

After Claude Code finishes executing, the project directory structure will look like this

Start the Agent

    ./gen-brief.bat