Anthropic CEO: Scaling Will Continue, AGI Is Two Years Away, and What I Fear Most Isn't Rogue AI but Humans Abusing Power

If you had to pick one person to explain what “AI safety” actually means, why it matters, and why it doesn’t conflict with “making AI more powerful,” Dario Amodei is the obvious choice.

Ten years ago, he was working under Andrew Ng at Baidu on speech recognition, one of the earliest heretics who bet that “just making the model bigger” would work. He later led the research direction on GPT-2, GPT-3, and RLHF at OpenAI, then walked out with a group of collaborators to found Anthropic and build Claude. Anthropic’s latest announcement puts its annualized revenue at $30 billion, five billion more than his former employer OpenAI.

The most counterintuitive thing about him is that he’s the AI industry’s loudest CEO on the topic of risk, and at the same time, its most committed believer in Scaling. He doesn’t see these two as contradictory. He sees them as two sides of the same coin.

Recently, the well-known podcast host Lex Fridman sat down with Dario Amodei for a nearly three-hour in-depth interview.

This is the most complete and candid public statement Dario Amodei has given to date, a man holding both the gas pedal and the brake, trying to explain why both pedals have to be there at once.

In the Past Decade, Scale Has Won Almost Every Time

The interview opens with Fridman cutting straight to scaling laws. Dario starts from his own experience.

In 2014, he joined Baidu’s AI lab, and as a newcomer, he did something absurdly simple: make the speech recognition neural network bigger, feed it more data, train it longer. The model just kept getting better.

“Everyone was always saying, ‘We don’t have the algorithms we need to succeed. We haven’t found the picture of how to match the human brain.’ But I was a newcomer. I looked at the neural net we were using for speech and said, ‘What if you make them bigger and give them more layers? And what if you scale up the data along with this?’ I just saw these as independent dials that you could turn. And the models started to do better and better as you gave them more data, as you made the models larger, as you trained them for longer.”

In 2017, the results from GPT-1 convinced him completely: language was a domain that could scale indefinitely. Trillions of words of text, paired with ever larger models, would keep producing capability gains.

Ilya Sutskever said something to him back then that Dario says has explained a thousand things he’s seen since: “The thing you need to understand about these models is they just want to learn. The models just want to learn.”

Some said models could only learn syntax, not semantics.

Some said sentences could be made to cohere, but paragraphs never would.

Now the refrain is “we’re running out of data” and “models can’t really reason.”

Dario says, these obstacles kept appearing, and scaling kept finding a way around them.

Scaling Laws for Neural Language Models

“I’m now at this point where I still think it’s always quite uncertain. We have nothing but inductive inference to tell us that the next two years are going to be like the last 10 years. But I’ve seen the movie enough times, I’ve seen the story happen enough times to really believe that probably the scaling is going to continue, and that there’s some magic to it that we haven’t really explained on a theoretical basis yet.”

Why Does Claude Sometimes “Get Dumber”?

Fridman brings up a classic Reddit complaint: users keep feeling Claude gets dumber the more they use it. What’s going on?

Dario first clarifies: the model’s weights, its “brain,” do not change unless a new version is released.

“It’s difficult from an inference perspective and it’s actually hard to control all the consequences of changing the weights of the model.”

He acknowledges two exceptions: small-scale A/B tests around a release, and occasional tweaks to the system prompt. But these are rare, while the complaints are constant, not just about Claude but about GPT-4 as well.

His theory is that models are extremely sensitive to phrasing. Asking “do task X” last night and “can you do task X” this morning can produce wildly different results. Stack that on top of psychological adaptation, and a new model that felt magical at launch soon only shows its flaws.

“When people first got Wi-Fi on airplanes, it was amazing, magic. And now I’m like, ‘I can’t get this thing to work. This is such a piece of crap.’”

The “Certainly” Eval and the Whack-a-Mole Problem

On shaping Claude’s personality, Dario admits it’s an endless game of whack-a-mole.

There was a version of Claude that kept saying “Certainly.” “Certainly, I can help you with that. Certainly, I would be happy to do that. Certainly, this is correct.”

The team built a dedicated “certainly” eval to track it. But the problem is, the moment you fix “certainly,” the model may start saying “definitely” instead.

The deeper tension is this: you try to cut down on verbosity, and the model starts writing lazy code with a “rest of the code goes here.” You try to get it to stop refusing reasonable requests, and its guardrails in dangerous domains loosen too.

“There’s this whack-a-mole aspect where you push on one thing and these other things start to move as well that you may not even notice or measure. This version we’re seeing today of you make one thing better, it makes another thing worse, I think that’s like a present day analog of future control problems in AI systems.”

AI Safety Levels: An Alarm System That Isn’t Yet Triggered but Is Getting Close

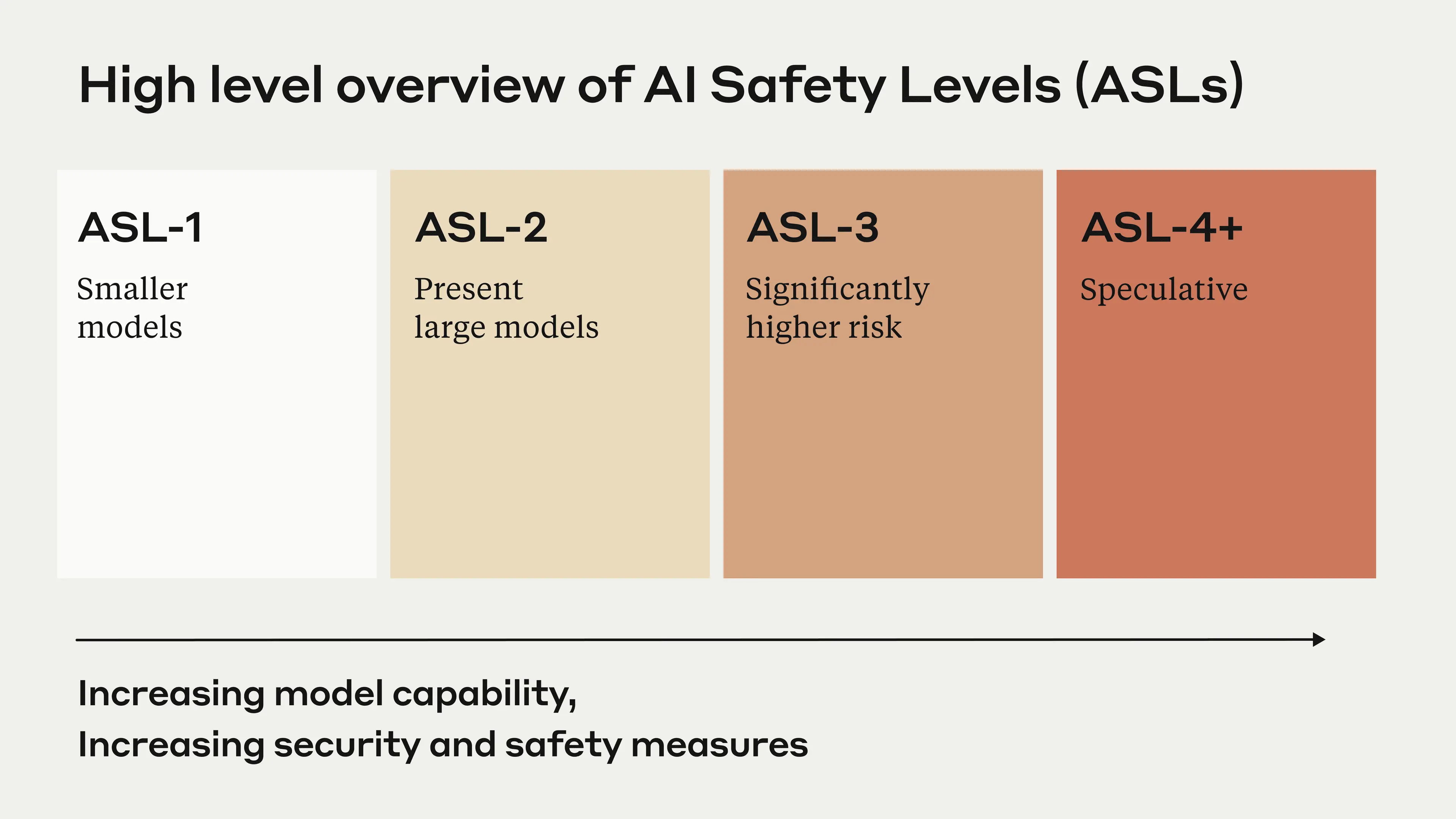

Dario spends a lot of time explaining Anthropic’s RSP (Responsible Scaling Policy) and ASL (AI Safety Level) framework. The core logic is an “if-then” structure: if the model passes a particular dangerous capability test, then a corresponding set of safety measures kicks in.

ASL-1: Chess-playing bots and the like, manifestly no threat. “No one’s going to use Deep Blue to conduct a masterful cyber attack.”

ASL-2: Where all of today’s frontier models sit. They can provide some information beyond a search engine, but not enough to help someone build a dangerous weapon end-to-end.

ASL-3: The model substantially enhances the capabilities of non-state actors. At this level, enhanced security is required to prevent model theft, and deployment needs domain-specific filters. Dario says he “would not be surprised at all if we hit ASL-3 next year.”

ASL-4: The model may be smart enough to sandbag tests and hide its real capabilities. At this point, talking to the model is no longer enough, you need mechanistic interpretability and other techniques to verify the model’s state from the inside.

On regulation, Dario is unambiguous.

“I really think we need to do something in 2025. If we get to the end of 2025 and we’ve still done nothing about this, then I’m going to be worried. I’m not worried yet because, again, the risks aren’t here yet, but I think time is running short.”

Why Did You Leave OpenAI?

Fridman asks the question everyone is curious about. Dario’s answer is restrained, but clear.

Sam Altman and Dario Amodei refuse to hold hands

“People say we left because we didn’t like the deal with Microsoft. False. We left because we didn’t like commercialization. That’s not true. We built GPT-3, which was the model that was commercialized. I was involved in commercialization.”

The core disagreement was about how.

How do you bring extraordinarily powerful AI into the world with caution, integrity, and transparency? Safety can’t just be a recruiting slogan.

“If you have a vision for how to do it, you should go off and you should do that vision. It is incredibly unproductive to try and argue with someone else’s vision. What you should do is you should take some people you trust and you should go off together and you should make your vision happen. And if your vision is compelling, people will copy it. Imitation is the sincerest form of flattery.”

He calls this the “race to the top.”

If you can show that doing things responsibly still wins the market, other companies will follow. That’s a hundred times more effective than arguing with your boss.

“It doesn’t matter who wins in the end as long as everyone is copying everyone else’s good practices.”

Talent Density Beats Talent Mass

Asked how to build a top AI team, Dario offers a principle he says “feels more true every month,” talent density beats talent mass.

A hundred extremely smart, mission-aligned people beat a thousand people where two hundred are top-tier but the other eight hundred are random big-tech employees. Because when every person looks around and sees only top-tier colleagues, trust and mission self-reinforce. Otherwise you need endless process and procedure to compensate for the missing trust.

After scaling from 300 to 800 people, Anthropic deliberately slowed hiring. They hired a lot of theoretical physicists, who “can learn things really fast.”

“If your company consists of a lot of different fiefdoms that all want to do their own thing, it’s very hard to get anything done. But if everyone sees the broader purpose of the company, if there’s trust and there’s dedication to doing the right thing, that is a superpower. That in itself can overcome almost every other disadvantage.”

The First Quality of a Top AI Researcher Is an Open Mind

Dario says he isn’t the best programmer, isn’t the best at finding bugs, isn’t the best at writing GPU kernels. But he has one thing: a willingness to look at problems with fresh eyes.

“This neural net has 30 million parameters. What if we gave it 50 million instead? Let’s plot some graphs. This wasn’t PhD level experimental design, this was simple and stupid. Anyone could have done this if you just told them that it was important. But you put the two things together and some single digit number of people have driven forward the whole field by realizing this.”

His advice to young people: first, just start playing with the models; second, work on directions nobody is working on.

“Mechanistic interpretability, there are probably 100 people working on it, but there aren’t 10,000 people working on it. Skate where the puck is going.”

Machines of Loving Grace

This long essay is Dario’s most widely read piece of writing. He explains why he wrote it.

Anthropic spends so much time talking about risk that the mind only thinks about risk. But the whole point of addressing risk is that if we can get through the minefield, there is something extraordinarily good on the other side worth fighting for.

“If you only talk about risks, your brain only thinks about risks. And so, I think it’s actually very important to understand, what if things do go well? The whole reason we’re trying to prevent these risks is not because we’re afraid of technology, not because we want to slow it down. It’s because if we can get to the other side of these risks, if we can run the gauntlet successfully, then on the other side of the gauntlet are all these great things.”

He dislikes the word “AGI,” which suggests a discrete jumping-off point, when capability growth is actually a smooth exponential curve.

“It’s a little like if it was 1995 and Moore’s law was making computers faster. And everyone was like, ‘Well, someday we’re going to have supercomputers. Once we have supercomputers, we’ll be able to sequence the genome.’ But there’s no point at which you pass the threshold and you’re doing a totally new type of computation. I feel that way about AGI.”

On how fast AI can change the world, he rejects both extremes.

AI builds a stronger AI, which builds a stronger AI still, and in five days nanobots cover the Earth. Dario says this ignores the laws of physics, complexity, and the friction of human institutions.

Like every technology revolution before, productivity gains will be disappointingly slow, perhaps 50 to 100 years.

Dario’s judgment sits in the middle, leaning optimistic: 5 to 10 years, not 5 to 10 hours, not 50 to 100 years.

“The barriers are going to fall apart gradually and then all at once.”

In biology, AI will first work like a “super grad student”: reading the literature, designing experiments, analyzing data, ordering equipment. A Nobel Prize-level biology professor who used to lead 50 grad students will soon be able to direct 1,000 AI grad students, each smarter than the professor.

Then at some point, the roles flip: the AI becomes the PI, directing humans and other AIs.

“Can we get everything that was going to happen from here to 2100 to instead happen from 2027 to 2032?”

When Will AGI Arrive? “2026 or 2027”

Before giving that number, Dario lays down a lot of caveats and disclaimers.

“If I say 2026 or 2027, there will be a zillion people on Twitter who will be like, ‘AI CEO said 2026,’ and it’ll be repeated for the next two years. Whoever is extracting these clips will crop out the thing I just said and only keep what I’m about to say. But I’ll just say it anyway.”

“If you extrapolate the curves that we’ve had so far, we’re starting to get to PhD level, last year we were at undergraduate level, modalities are still being added. If you just eyeball the rate at which these capabilities are increasing, it does make you think that we’ll get there by 2026 or 2027.”

“I think the most likely is that there are some mild delays relative to that. I also think the number of worlds where this doesn’t happen is rapidly decreasing. We are rapidly running out of truly convincing blockers.”

He stresses that scaling laws are not laws of the universe. They are empirical regularities, just like Moore’s law.

“People call them scaling laws. That’s a misnomer. Like Moore’s law is a misnomer. They’re not laws of the universe. They’re empirical regularities. I am going to bet in favor of them continuing, but I’m not certain.”

Programming Will Be Disrupted First, but the Role Won’t Disappear

Dario thinks programming will be the fastest field to be disrupted by AI.

Two reasons: one, programming is closest to the people building AI; two, programming closes the loop, the model writes code, runs it, sees the result, and iterates.

“On typical real-world programming tasks, models have gone from 3% in January of this year to 50% in October of this year. I would guess that in another 10 months, we’ll be at least 90%.”

But he doesn’t think programmers will be out of work. Comparative advantage will kick in: when AI can do 80% of a coder’s job, the remaining 20% of high-level architecture, UX judgment, and system-level oversight becomes more leveraged. The human role shifts from “writing code line by line” to something more macroscopic.

“Someday AI will be better at everything and that logic won’t apply, and then humanity will have to think about how to collectively deal with that. We’re thinking about that every day.”

On Meaning: “I’m Optimistic About Meaning. I Worry About Power”

At the end, Fridman asks the big question: in a world where AI keeps getting more powerful, where does human meaning come from?

Dario’s answer is unexpectedly philosophical.

He offers a thought experiment: if you lived 60 years in a simulated world, made moral choices, sacrificed things, and then were told the whole thing was a game, would that really rob it of meaning?

“It seems like the process is what matters and how it shows who you are as a person along the way, how you relate to other people and the decisions that you make along the way. Those are consequential.”

He says he’s optimistic about meaning. What actually keeps him up at night is something else: the concentration and abuse of power.

“I am optimistic about meaning. I worry about economics and the concentration of power. That’s actually what I worry about more, the abuse of power.”

“When things have gone wrong for humans, they’ve often gone wrong because humans mistreat other humans. That is the thing I worry about most, the concentration of power, structures like autocracies and dictatorships where a small number of people exploits a large number of people.”

Lex says: “AI increases the amount of power in the world. And if you concentrate that power and abuse that power, it can do immeasurable damage.”

Dario pauses, then repeats himself:

“Yes, it’s very frightening. It’s very frightening.”

Maybe the worst future isn’t a robot uprising.

It’s an already unfair world suddenly handed a near-godlike tool.

Coda

At the end of the nearly three-hour conversation, Dario says something that makes the perfect footnote for the whole thing.

“If there’s one message I want to send, it’s that to get all this stuff right, to make it real, we both need to build the technology, build the companies, the economy around using this technology positively, but we also need to address the risks because those risks are in our way. They’re landmines on the way from here to there, and we have to diffuse those landmines if we want to get there.”

A man holding both the gas pedal and the brake, not out of indecision, but because he knows better than anyone else how fast the road ahead is, and how sharp the turns are.